- Published on

AWS S3 Disruption — ME-CENTRAL-1: Two AZs Down, Lessons for Everyone

- Authors

- Name

- Hoang Nguyen

What Happened

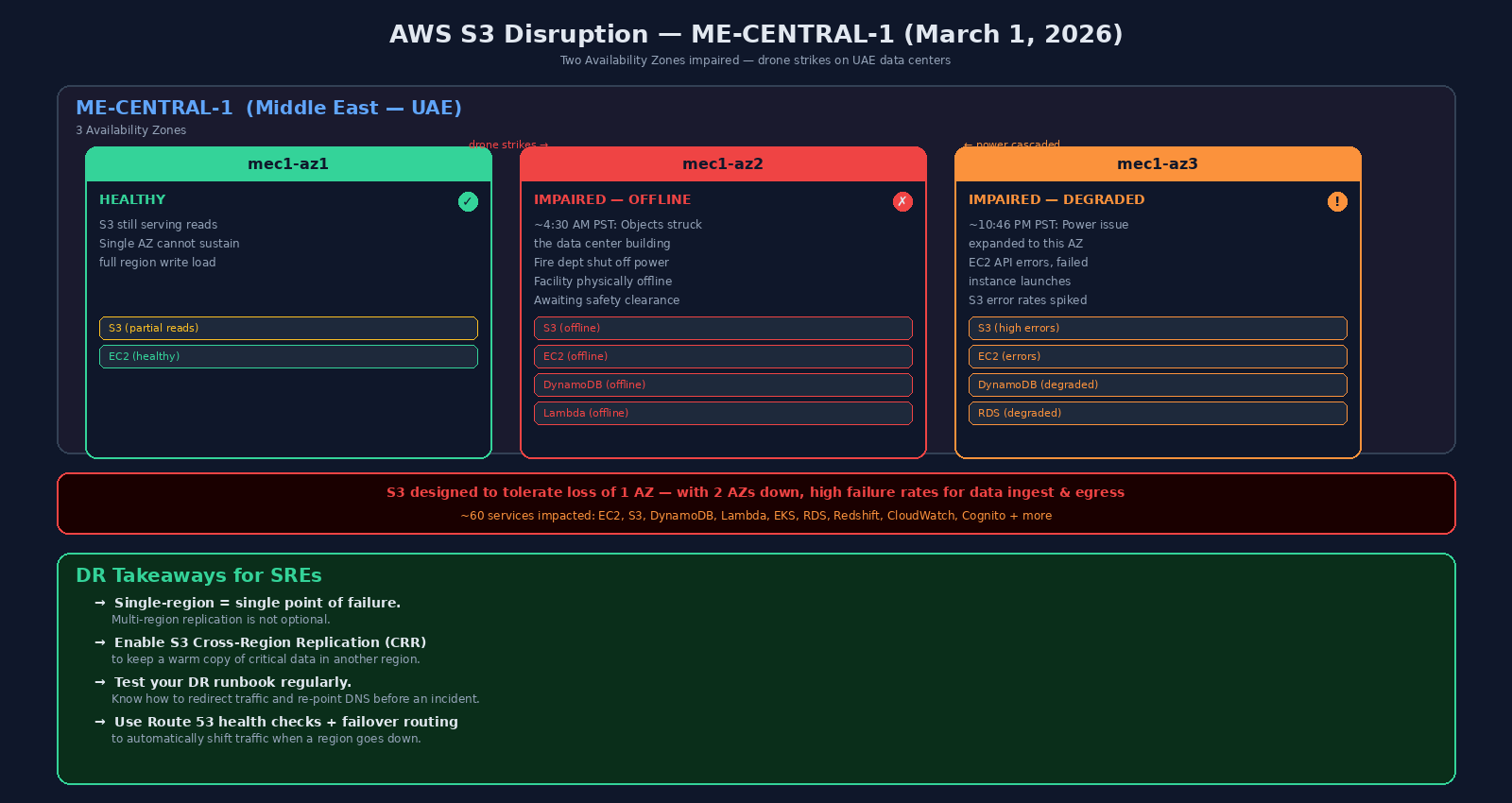

On March 1, 2026, two Availability Zones in the ME-CENTRAL-1 (Middle East — UAE) region went down, taking Amazon S3 and dozens of other AWS services with them. This was not a software bug or a misconfiguration. Physical objects — later confirmed by AWS as drone strikes — hit the data center buildings, sparking fires and forcing the fire department to cut power.

The ME-CENTRAL-1 region has three Availability Zones. Losing two of them at once pushed S3 past its design tolerance.

Timeline

| Time (PST) | Event |

|---|---|

| ~4:30 AM, Mar 1 | Objects struck the mec1-az2 facility, causing sparks and fire. Fire department shut off power and generators. |

| 5:19 AM, Mar 1 | AWS Health Dashboard reported connectivity and power issues affecting APIs and instances in mec1-az2. |

| ~10:46 PM, Mar 1 | Power issue expanded to mec1-az3. A second Availability Zone was now impaired. |

| Mar 2 onward | AWS continued recovery. mec1-az2 remained physically offline — engineers awaiting fire and safety clearance to re-enter the building. |

Why S3 Went Down

Amazon S3 is architected to tolerate the loss of a single Availability Zone. When only mec1-az2 went down, S3 continued operating normally — reads and writes were still being served from the remaining two AZs.

The moment mec1-az3 also became impaired, S3 lost its redundancy buffer. With two out of three AZs degraded, customers saw high failure rates for both data ingest and egress. PUT and LIST operations failed at elevated rates. Newly written objects could not be reliably retrieved.

What Else Was Impacted

At the peak of the incident, AWS listed nearly 60 services as affected:

- Offline or severely degraded: EC2, S3, DynamoDB, Lambda, EKS, Cognito

- Elevated errors: RDS, Redshift, CloudWatch, and more

- AWS Console and CLI were also disrupted in the region

The damage was not limited to ME-CENTRAL-1. AWS also reported connectivity and API errors in ME-SOUTH-1 (Bahrain), where a separate drone strike in close proximity caused a localized power issue in a single AZ, degrading 50+ services including EC2 and RDS.

Downstream, SaaS providers felt it too. Snowflake attributed service disruptions in the region to the AWS outage. Abu Dhabi Commercial Bank reported that its platforms and mobile app were affected.

How: AWS Recommendations

AWS issued clear guidance during the incident:

- Redirect S3 traffic to an alternate region immediately. Do not wait for recovery.

- Enact your disaster recovery plans. Recover from remote backups into alternate AWS regions.

- Operate out of alternate Availability Zones or Regions until full recovery is confirmed.

The Big Reminder

This incident drives home a point that every SRE has heard but not everyone has acted on:

Single-region architecture = single point of failure.

Multi-region replication and tested DR strategies are not optional. Here is the minimum checklist:

- Enable S3 Cross-Region Replication (CRR) to keep a warm copy of critical data in another region.

- Test your DR runbook regularly. Know how to redirect traffic and re-point DNS before an incident — not during one.

- Use Route 53 health checks + failover routing to automatically shift traffic when a region goes down.

- Avoid hard-coding a single region in your application config. Use environment variables or feature flags to switch regions quickly.

This was AWS's most significant outage since the US-EAST-1 incident in October 2025. But unlike a software bug that gets patched in hours, physical damage to a data center takes days to recover from. That changes the math on how much DR investment is "enough."

P/S: If your architecture only lives in one region and you're reading this thinking "it won't happen to us" — it already happened to AWS. In a region with three AZs. With two of them taken out by the same event. The question is not if, but when. Set up CRR this week.